Toshiba Develops AI with Three-Dimensional Recognition that Measures Distance Using a Commercially Available Monocular Camera with the Accuracy of a Stereo Camera

- Higher Accuracy, Lower Cost, and Smaller Size with Deep Learning for Use in Robot Picking, Autonomous Operation of Unmanned Delivery Vehicles, Remote-Controlled Drone Inspections of Infrastructure, etc. -

Toshiba Corporation

TOKYO─Toshiba Corporation (TOKYO: 6502) has developed AI with 3D recognition that measures distance with the accuracy of a stereo camera (Note 1). It does so by applying deep learning to the lens-induced blur in an image taken with a commercially available monocular camera. Eliminating the need for a stereo camera reduces both cost and space.

Toshiba will present this work at the International Conference on Computer Vision (ICCV2019), to be held in South Korea, on October 30, 2019, from 10:00 am.

In recent years, image sensing has become increasingly important in many sectors: robots moving objects, autonomous unmanned vehicles, remote-controlled drones inspecting infrastructure, and more. Applications like these require more than just images of the subject; they need a small device that can analyze 3D data, including shape and distance. In response, much attention has been given to measurement technology using monocular cameras, which are easier to miniaturize. Research is increasingly focusing on using deep learning to improve monocular distance measurement by learning the shape, background, and other scenery data of the imaged objects.

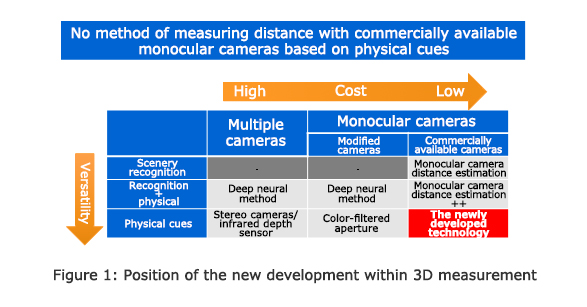

There is a drawback, however: the accuracy of distance estimation with a monocular camera depends on the learned scenery data, and it drops considerably for images taken in scenery that differs from that data. To eliminate this data dependence, Toshiba has developed color-filtered aperture photography (Notes 2, 3, 4). With this technology, two color filters are attached to the lens, and distance is estimated by analyzing the color and size of the resulting image blur, which vary with the distance to the subject. While this approach solves the data dependence issue, it costs time and money to modify existing lenses (Figure 1).

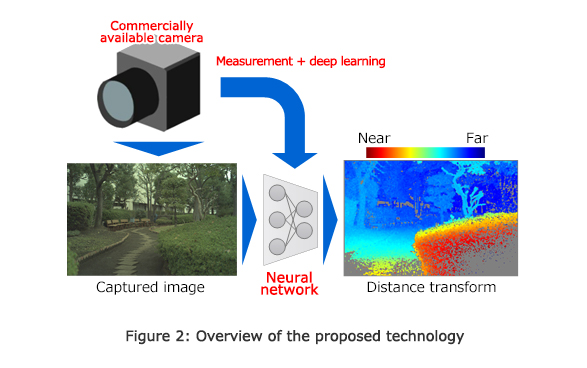

Toshiba has overcome this problem by developing AI with 3D recognition technology that uses deep learning to analyze how the image is blurred (the shape of the blur) according to its position on the lens. This achieves distance measurement with the same high precision as a stereo camera system, using a normal monocular camera and without any need for scenery data (Figure 2).

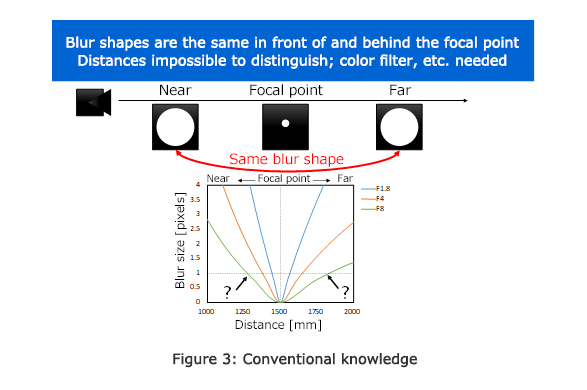

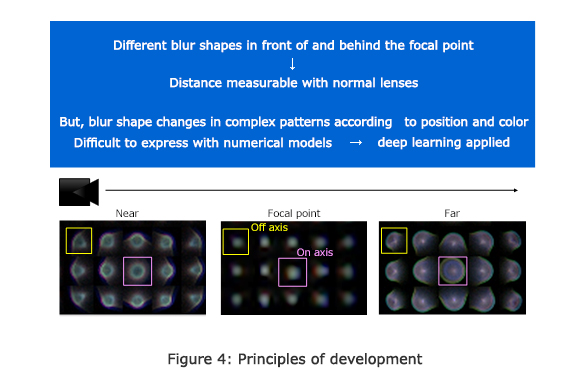

Until now, measuring distance from the shape of the blur was considered theoretically too difficult, because the blur was thought to be the same for near and far objects that are equidistant from the focal point (Figure 3). However, analysis revealed a substantial difference between the blur shapes of near and far objects, even when they are equidistant from the focal point (Figure 4). Building on this finding, Toshiba successfully analyzed the blur data in captured images with a deep learning module based on a trained deep neural network model.
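The ambiguity described above can be illustrated with a minimal sketch that assumes an idealized thin lens, which is a textbook simplification and not Toshiba's model. In that model, a near object and a far object matched in inverse-distance terms around the focus distance produce blur circles of identical diameter, so blur size alone cannot tell them apart. The lens values and function names here are hypothetical, chosen only for illustration.

```python
def blur_diameter_mm(subject_mm, focus_mm, focal_length_mm, aperture_mm):
    """Blur-circle diameter on the sensor for an ideal thin lens."""
    return (aperture_mm * focal_length_mm * abs(subject_mm - focus_mm)
            / (subject_mm * (focus_mm - focal_length_mm)))


def far_distance_with_same_blur_mm(near_mm, focus_mm):
    """Far-side distance whose blur diameter equals that of `near_mm`."""
    # Equal blur on both sides of focus means equal offsets in 1/distance.
    inverse_far = 2.0 / focus_mm - 1.0 / near_mm
    if inverse_far <= 0:
        raise ValueError("no finite far-side match for this near distance")
    return 1.0 / inverse_far


# Hypothetical lens: 50 mm focal length, 25 mm aperture, focused at 2 m.
FOCAL_LENGTH_MM, APERTURE_MM, FOCUS_MM = 50.0, 25.0, 2000.0

near_mm = 1500.0
far_mm = far_distance_with_same_blur_mm(near_mm, FOCUS_MM)
near_blur = blur_diameter_mm(near_mm, FOCUS_MM, FOCAL_LENGTH_MM, APERTURE_MM)
far_blur = blur_diameter_mm(far_mm, FOCUS_MM, FOCAL_LENGTH_MM, APERTURE_MM)
print(f"near {near_mm:.0f} mm -> blur {near_blur:.4f} mm")
print(f"far  {far_mm:.0f} mm -> blur {far_blur:.4f} mm")
```

Because the two diameters match in this idealized model, any distance cue has to come from differences in blur shape that the model ignores, which is the kind of difference the analysis above reports for real lenses.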

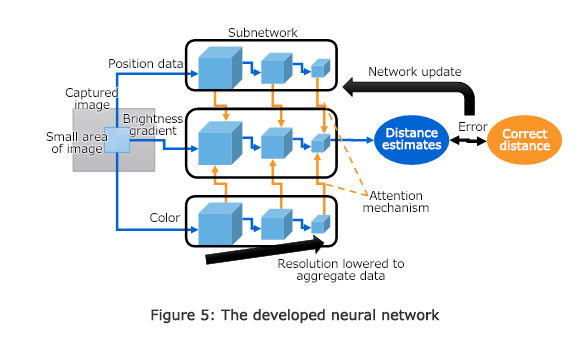

When light passes through a lens, the shape of the blur created is known to change depending on the light's wavelength and its position in the lens. In the developed network, position and color data are processed separately to properly perceive changes in blur shape, and then, after passing through a weighted attention mechanism, are used to control where on the brightness gradient to focus in order to correctly measure the distance (Figure 5). Through learning, the network is then updated to reduce any error between the measured distance and actual distance.
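As a rough illustration of attention weighting and error-driven updates, the sketch below pools hypothetical per-region distance estimates with softmax attention weights and then adjusts the attention scores by gradient descent on the squared error. It is a toy under stated assumptions, not the network described here: the region estimates, the learning rate, and all function names are invented for the example, and in a real network the scores would come from learned layers rather than being updated directly.

```python
import math


def softmax(scores):
    """Turn raw attention scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def pooled_distance(region_distances, attention_scores):
    """Attention-weighted average of per-region distance estimates."""
    weights = softmax(attention_scores)
    return sum(w * d for w, d in zip(weights, region_distances))


def training_step(region_distances, attention_scores, actual_distance, lr=0.5):
    """One gradient-descent step on the squared distance error."""
    weights = softmax(attention_scores)
    predicted = sum(w * d for w, d in zip(weights, region_distances))
    error = predicted - actual_distance
    # For softmax pooling, d(predicted)/d(score_i) = w_i * (d_i - predicted).
    return [
        s - lr * 2.0 * error * w * (d - predicted)
        for s, w, d in zip(attention_scores, weights, region_distances)
    ]


# Hypothetical per-region estimates (metres) and a known actual distance.
region_distances = [1.0, 2.0, 3.0]
scores = [0.0, 0.0, 0.0]
actual = 2.5

initial_error = abs(pooled_distance(region_distances, scores) - actual)
for _ in range(200):
    scores = training_step(region_distances, scores, actual)
final_error = abs(pooled_distance(region_distances, scores) - actual)
print(f"error before: {initial_error:.3f} m, after: {final_error:.3f} m")
```

The updates shift weight toward the regions whose estimates pull the pooled value closer to the actual distance, which is the general idea of letting attention decide where to focus.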

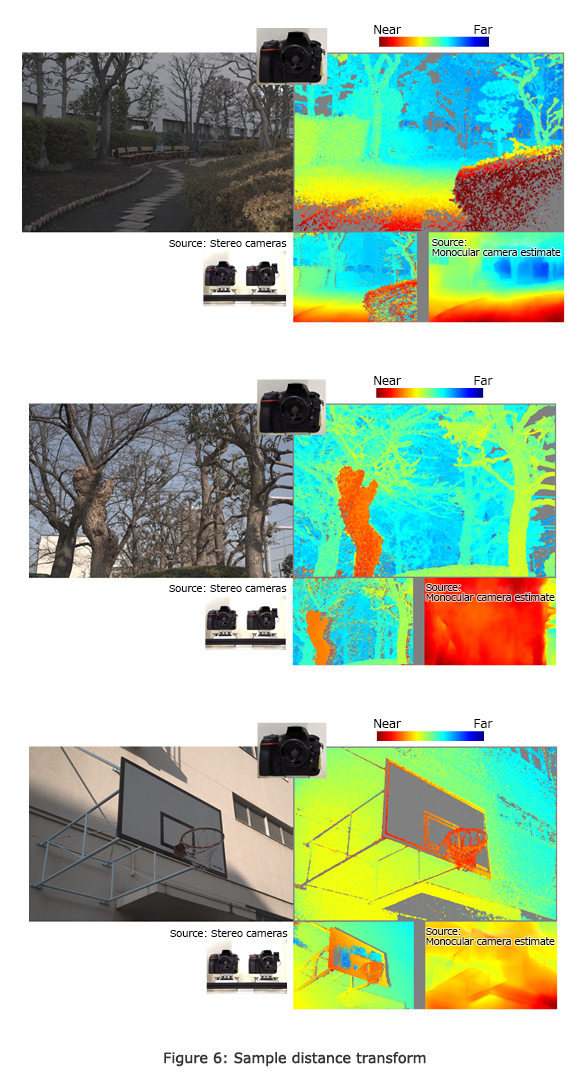

Using this AI module, Toshiba has confirmed that a single image captured with a commercially available camera achieves the same distance measurement accuracy as a stereo camera.

Toshiba will confirm the versatility of the system with commercially available cameras and lenses and speed up the image processing, aiming for public implementation in fiscal year 2020.

- (Note 1) Cameras that simultaneously capture an object from multiple directions to also record depth information. Usually refers to a single unit that reproduces binocular vision and takes three-dimensional images in which three-dimensional space can be recognized.

- (Note 2) Y. Moriuchi, N. Mishima, "Depth from Asymmetric Defocus Using Color-Coded Apertures", 22nd Symposium on Sensing via Image Information, IS1-34, 2016

- (Note 3) N. Mishima, T. Sasaki, "Imaging Technology Accomplishing Simultaneous Acquisition of Color Image and High-Precision Depth Map from Single Image Taken by Monocular Camera", Toshiba Review, Vol. 73 No. 1, 2018

- (Note 4) N. Mishima et al., "Physical Cue based Depth-Sensing by Color Coding with Deaberration Network", 30th British Machine Vision Conference, 2019